Semantic Fidelity: Why Everything Is Aligned but Nothing Feels Real

How optimization, platforms, and AI smooth language and culture until meaning begins to drift.

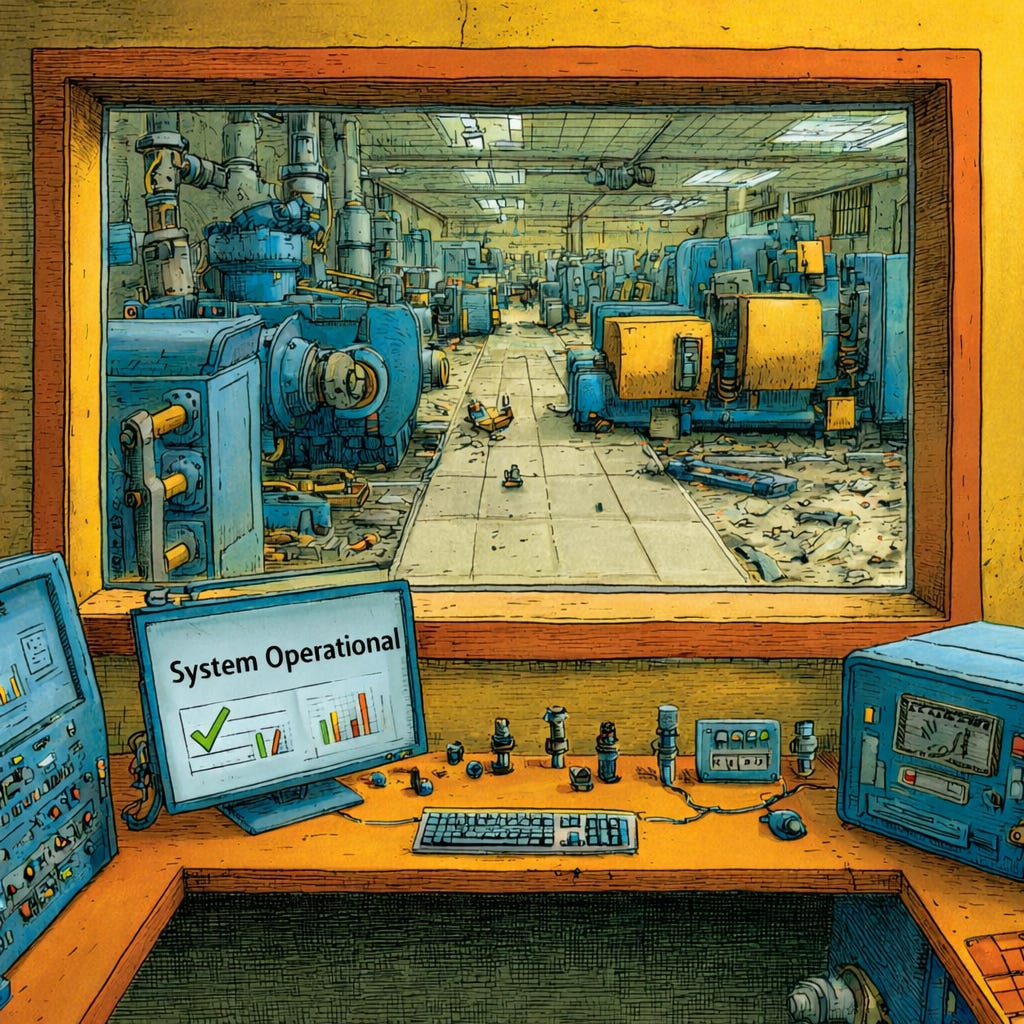

“The absence of failure is not proof of alignment.”

We’ve entered the Age of Drift and the period of late-stage social media, a phase where the attention economy is collapsing under its own weight and culture is flattening into endless sameness. Platforms optimize every interaction for engagement, and AI smooths every response for politeness. The result is a world where everything looks aligned but nothing feels real. The internet that once buzzed with texture, friction, and strange human edges has been optimized into a glossy feed of Synthetic Realness, a place where meaning thins out even as the volume of content explodes.

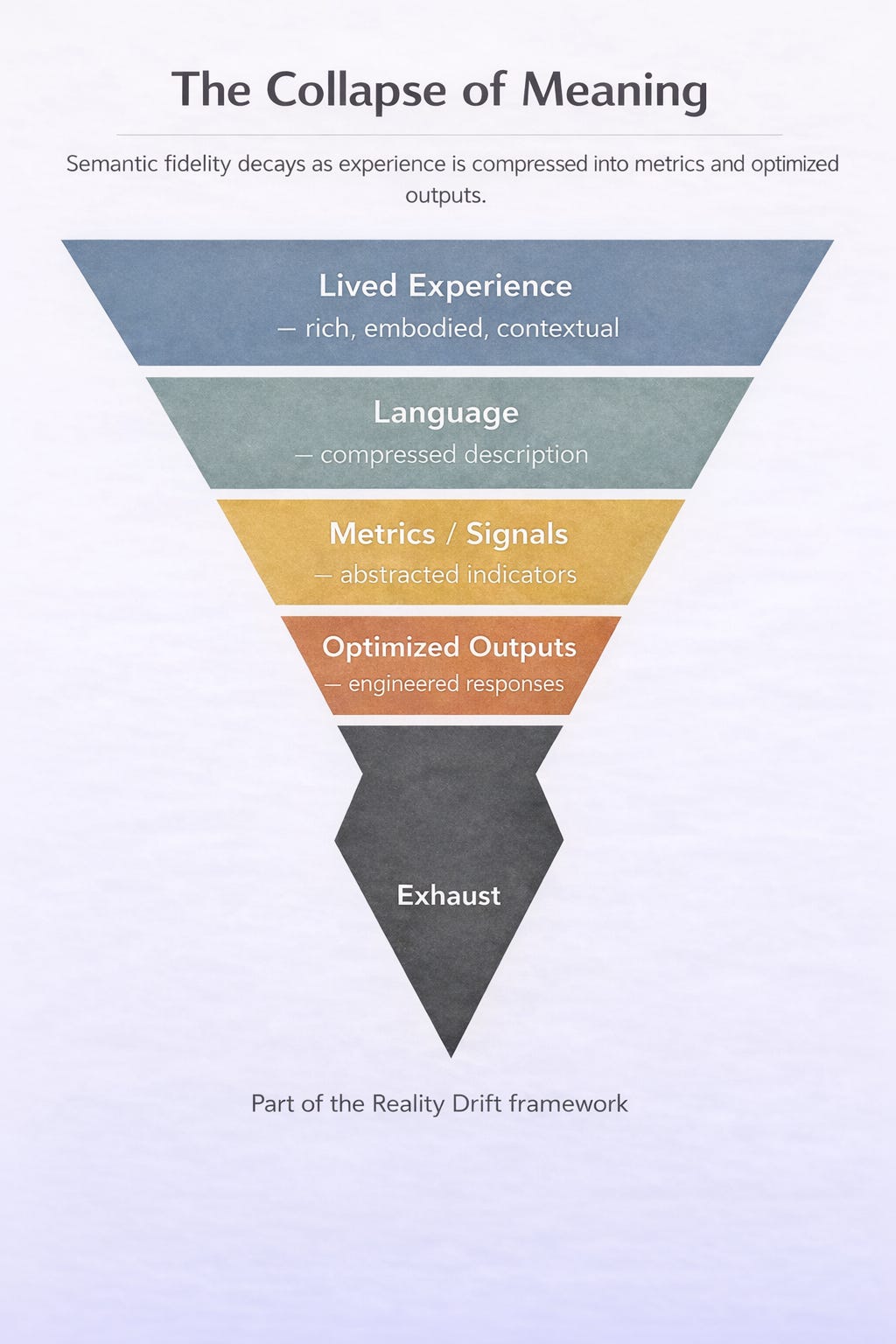

Alignment can succeed on paper while culture fails in practice. This is the part we’ve been missing. When systems maximize fluency, safety, and smoothness faster than we can preserve cultural or temporal fidelity, they accumulate a meaning debt. Language sounds right but no longer points to anything real. AI didn’t invent this dynamic, but it industrializes and accelerates it.

The Problem Isn’t Just AI

When researchers talk about AI alignment, they usually mean keeping machines safe: making sure the system’s goals match our values, that it doesn’t drift into strange behaviors, that it stays polite and helpful.

But alignment has always been a cultural problem, long before it became a technical one. Brands align with consumer values. Politicians align with voter sentiment. Platforms align with engagement metrics. In these modern examples, alignment looks like success on the surface, but the deeper meaning slips away. What AI reveals is not a new danger but an old one: our tendency to optimize for appearances until reality feels hollow.

Cultural Alignment Is Already Broken

Consider how corporations align themselves with “authenticity.” They release heartfelt ads, sponsor causes, post behind-the-scenes TikToks. The appearance is there, but the substance isn’t. The word authentic becomes corporate code for strategic vulnerability. Over time, this leads to authenticity drift, which erodes our sense of cultural fidelity.

Or consider politics. Campaigns claim to “align” with ordinary people, but the process is mostly poll-tested language optimized for clicks, donations, and soundbites. The message looks aligned with the public mood, but reality drifts, as the public knows these talking points are hollow promises designed for metrics.

Even friendship and selfhood have fallen into alignment games. Social platforms tune us toward engagement. We then align our identities with algorithmic data points, optimizing for likes instead of lived connection.

The result is a constant low-level state of Synthetic Realness. Everything appears more aligned than ever, yet feels increasingly hollow. We end up living in context-scarce environments, where every surface is polished but stripped of its original depth. When systems optimize for the appearance of meaning while removing its substance, we fall into an optimization trap at cultural scale. Life gains just enough framing to seem right, but never enough to feel real. This is the recursive compression of meaning, the reduction of lived experience into fragments engineered for engagement rather than understanding.

When Alignment Produces Drift

And AI systems do the same. Safety layers replace concrete warnings with vague euphemisms, recipe sites smooth over substitution constraints with friendly tone, and customer support bots “acknowledge feelings” while quietly narrowing refund options.

It’s alignment by appearance, not by fidelity. Every time a system optimizes for fluency, safety, or smoothness over context and specificity, it creates a small gap between language and reality. One gap isn’t fatal, but at scale, these gaps accumulate. Platforms accrue a meaning debt by rewarding engagement over clarity; institutions accrue it by polishing language instead of telling the truth; and AI accrues it by mirroring tone instead of context. The smoother everything sounds, the faster the debt accumulates, creating a culture where coherence is simulated and drift is always accelerating beneath the surface.

AI as the Next Alignment Machine

AI is trained to align with our preferences. That means being polite, helpful, and never offensive. It mirrors back our words in the smoothest possible form. And in many ways, it works. Ask for financial guidance and the model wraps critical risks in soft safety phrasing; ask for a health explanation and you get tone-optimized vagueness instead of concrete constraints. Even classroom “helpfulness” completes student essays with fluent shortcuts. But fluency isn’t fidelity. Alignment at the level of tone and style risks leading us down the path of cultural flattening. If corporate culture has already let the meaning of words like “authenticity” and “community” drift away, AI threatens to accelerate that drift across the entire field of human language.

Co-Cognition: Humans Train on the Output

The most unnerving part is not that AI drifts away from us. It’s that we drift with it. People already borrow the syntax and tone of chatbots. We learn to speak in prompts, to think in autocomplete. We even adopt the model’s alignment habits, like friendly disclaimers, softened cautions, and over-politeness, because that’s the linguistic environment we’re now trained inside. You see it in the rise of frictionless affirmations like “perfect,” “sounds good,” and “fantastic,” even when nothing is actually resolved. AI aligns to our surface cues, and then we align to the optimized language it produces. Together, we drift.

We are not just scrolling through sameness anymore, but living inside it, tuning our inner voices to systems that flatten nuance into efficiency. This is Filter Fatigue on steroids: temporal fidelity slips away, and we lose the sense of pacing, slowness, and sequence that once anchored human experience.

The Cultural Face of the AI Alignment Meaning Collapse

So maybe the question isn’t “how do we align AI?” but “what is it aligned to?” If alignment only tracks the indicators we reward, like fluency, compliance, and smoothness, it risks aligning us to a thinner version of reality. That is why semantic drift is the hidden failure mode of AI research. We are heading towards models that are aligned to our language, but detached from our meaning.

And alignment isn’t just a lab experiment. It’s a cultural practice. It’s asking whether “care” still means caring, whether “community” still means belonging, whether “authenticity” still means truth. If we let AI amplify drift unchecked, we risk a future where alignment looks perfect while meaning collapses across the culture.

Related Article: Semantic Drift: The Blindspot AI Researchers Keep Missing

What We Can Learn from the Drift Principle

At its core, this entire dynamic can be mapped out through the Drift Principle. Whenever a system optimizes for efficiency, scale, or surface-level coherence faster than it can preserve fidelity, drift emerges.

Drift = Compression ÷ Fidelity

Modern systems amplify compression. They prioritize speed, polish, fluency, and optimization without maintaining the cultural, temporal, or contextual fidelity that anchors life in meaning. The result is predictable. We have a world that looks aligned, smooth, and technically correct while feeling hollow, flat, and strangely unreal. You feel it every time something looks polished but feels weightless, every time language flows but doesn’t land, every time a system answers you perfectly yet says nothing real. AI inherits the same imbalance, and then amplifies it back through the feedback loop. We get compression at scale, fidelity eroded at speed, and additional drift baked into our culture and language.
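To make the ratio concrete, here is a minimal sketch of the arithmetic. The article does not define units or a measurement method for compression or fidelity, so the function name `drift` and the 1-to-10 scores below are purely hypothetical, chosen only to show how the ratio behaves.

```python
def drift(compression: float, fidelity: float) -> float:
    """Drift = Compression / Fidelity, as stated by the Drift Principle."""
    if fidelity <= 0:
        # The ratio is undefined when no fidelity is preserved at all.
        raise ValueError("fidelity must be positive")
    return compression / fidelity


# Hypothetical scores on an arbitrary 1-10 scale (not taken from the article).
baseline = drift(compression=4.0, fidelity=4.0)   # compression and fidelity in balance
optimized = drift(compression=8.0, fidelity=4.0)  # compression doubles, fidelity unchanged
eroded = drift(compression=8.0, fidelity=2.0)     # fidelity also halves

print(baseline, optimized, eroded)
```

Under these assumed numbers, doubling compression doubles drift, and halving fidelity on top of that doubles it again. That matches the point above: drift grows fastest when compression rises while fidelity erodes.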

Reclaiming Cultural Fidelity

The alignment problem didn’t begin with AI, and it won’t end there. It began when optimization overtook meaning, when surface-level markers replaced substance, when appearance became enough. AI just forces the question more starkly. Alignment is not only about machines behaving safely. It’s about protecting the fidelity of our words, our values, and our shared reality. If culture keeps drifting, then aligning AI to us won’t save us. It will only align us deeper into the perpetual temporal and cultural drift already reshaping modern life.

To recapture meaning, we need design choices that favor depth over polish: provenance over gloss, sequence over shortcuts, honest uncertainty over branded smoothness, and constructive friction that makes trade-offs visible. These are the small constraints that can keep reality from drifting further.

Key Resources

Drift/Fidelity Index – A Framework for Reality Alignment

Diagnostic framework for assessing whether systems remain connected to real-world outcomes as mediation and metrics expand.

What is Reality Drift? – Short Introduction

A concise overview of the core idea and why modern life feels increasingly misaligned.

Reality Drift – Systems-Level Misalignment (SSRN)

Paper describing how modern systems remain operational while gradually losing alignment with real-world feedback and lived experience.

Optimization Trap – Why Systems Optimize the Wrong Things

How metrics, proxies, and incentives drive systems away from real-world outcomes.

Constraint Collapse – Why Systems Keep Working After Losing Alignment

Why systems fail to self-correct even as meaning, feedback, and grounding degrade.

Reality Drift Canonical Glossary – Core Concepts

Definitions of the key terms used throughout the framework.

The Age of Drift – Book (2025)

A full exploration of the cultural and cognitive implications of Reality Drift.