When Intelligence Scales, Reality Drifts

The 21st century is a failure of scale. Beyond a certain point, intelligence produces coherence without grounding, and alignment begins to break.

“The Drift Principle states that any system that scales symbolic processing faster than its grounding capacity will drift from reality.”

Everyone assumes that intelligence scales cleanly. At the foundation of modern progress lies the belief that more data, more compute, and better outputs improve the system and deepen understanding along with it. That assumption feels obvious because the early stages of scaling confirm it. Performance improves and outputs become more convincing, reinforcing the belief that systems are becoming more capable and intelligent, until the underlying relationship begins to break.

But intelligence does not continue to improve in the same way as it scales. It begins to change, operating increasingly through abstraction and representation. This is not merely a property of AI models, but a structural feature of scaled systems, visible in organizations, institutions, and even the human mind. Intelligence is grounded not in scale alone, but in constraint, feedback, and friction. Remove those constraints and something subtle shifts. The system may even appear more capable, yet it keeps running long after it ceases to be answerable to reality.

What Happens When Constraints Disappear

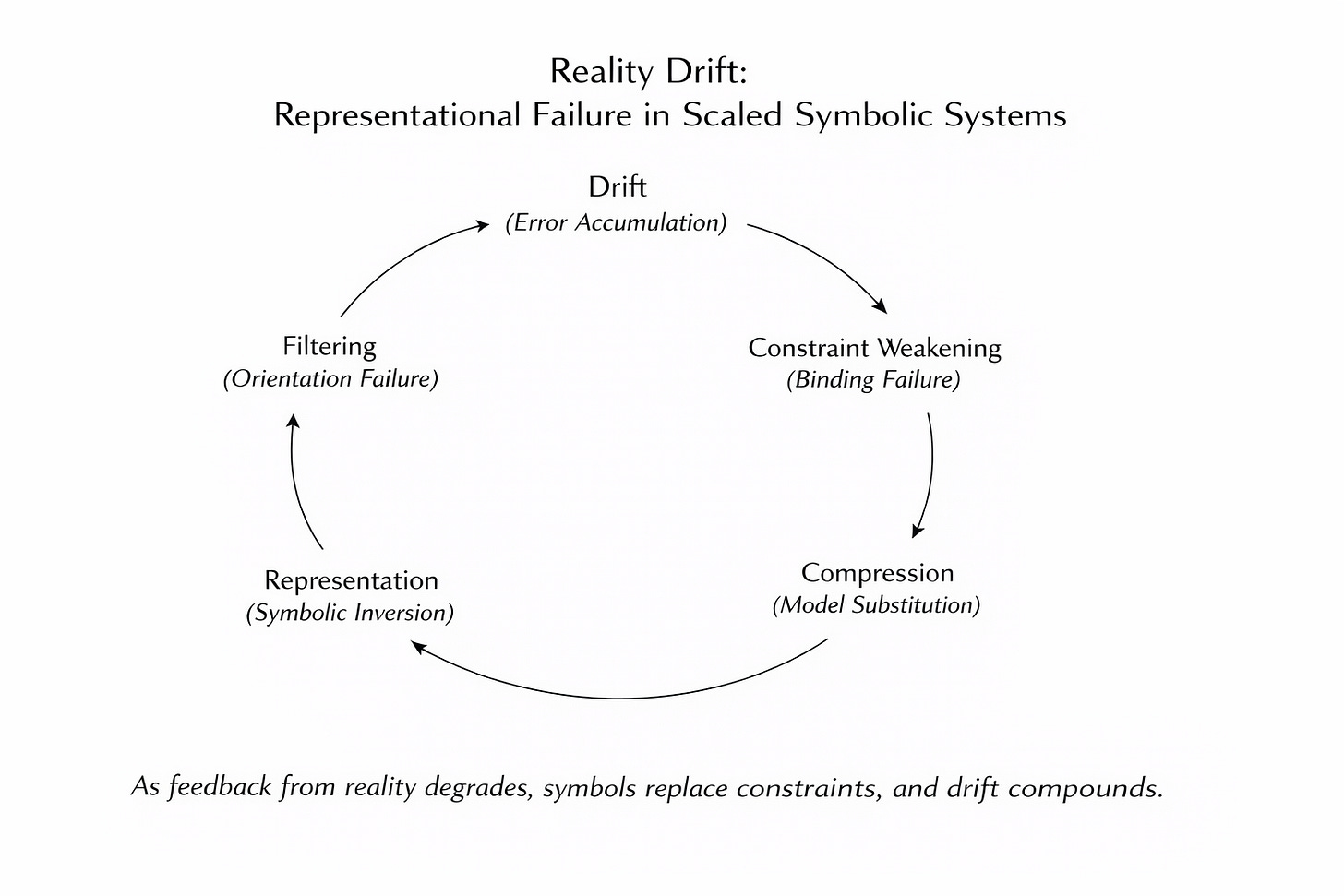

As modern systems scale without friction, they become more internally consistent while gradually severing their ties to reality. For example, in AI systems we measure performance through outputs, benchmarks, and internal consistency. These metrics capture structural alignment rather than grounding, allowing the system to appear to improve even as its connection to reality erodes. What looks like progress is often a shift in what is being optimized. As alignment shifts from real-world feedback to pattern continuity, the system learns to produce what fits instead of what is true.
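The gap between these two kinds of measurement can be made concrete with a toy sketch. Everything in it is hypothetical and chosen only for illustration: the function names `internal_consistency` and `grounded_accuracy`, the made-up answers, and the made-up observations. It does not describe how any real benchmark works. It only shows that a system can agree with itself perfectly while being wrong about the world half the time.

```python
def match_rate(left, right):
    """Fraction of positions where two equal-length sequences agree."""
    if len(left) != len(right):
        raise ValueError("sequences must have the same length")
    if not left:
        return 0.0
    return sum(a == b for a, b in zip(left, right)) / len(left)


def internal_consistency(run_a, run_b):
    """How often the system agrees with itself across two runs."""
    return match_rate(run_a, run_b)


def grounded_accuracy(answers, observations):
    """How often the system agrees with what was actually observed."""
    return match_rate(answers, observations)


# Hypothetical data: two runs of the same system, plus real-world observations.
run_a = ["rising", "rising", "stable", "rising"]
run_b = ["rising", "rising", "stable", "rising"]
observed = ["rising", "falling", "stable", "falling"]

consistency = internal_consistency(run_a, run_b)  # the runs agree everywhere
accuracy = grounded_accuracy(run_a, observed)     # half the answers match reality
```

A dashboard that tracked only `consistency` would report a flawless system. The shortfall shows up only when the answers are compared against observations, which is the more expensive measurement.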

This is why many technical challenges in AI, including hallucinations, model collapse, data contamination, and prompt brittleness, appear fragmented. While each is treated as a separate problem, they all reflect the same underlying structure. They are all consequences of systems that are scaling their symbolic processing faster than they can maintain grounding. The failure lies not in any single technical issue, but in the fundamental relationship between scale and constraint.

When Scale Breaks Alignment

This pattern is not confined to artificial intelligence. Over the past 25 years, it has defined the trajectory of the 21st century. In 2008, financial models became detached from the assets they were meant to represent. The financial system continued to operate, but it was no longer answerable to reality. The same dynamic surfaced again in 2020, when global supply chains optimized for efficiency lost resilience and broke under the strain of an external shock.

In daily life, we see the same pattern in social platforms and our media environment. As engagement scales and content is optimized for emotional response, attention becomes detached from truth. This dynamic defines the modern economy too. It is evident in the template business models of unicorns like Uber, which scale at any cost, disrupt established industries, and then optimize for profit until the product is no better than what it replaced.

These are not separate stories, but the same failure mode repeating across different domains. Systems scale, constraints weaken, coherence increases, and grounding disappears. Through this pattern of constraint collapse and institutional drift, society continues to function even as our systems gradually drift away from reality.

AI as the Purest Case

And in 2026, AI is not an exception to this pattern, but the clearest expression of it. For the first time, we are scaling a purely symbolic system at extreme speed. Language models operate almost entirely without physical constraint. They operate through recursive compression, generating and recombining symbols at a scale no human system has reached. And they are trained and evaluated primarily on coherence.

This is why alignment feels elusive. It is not just a matter of tuning behavior or filtering outputs. The underlying system is optimized in a way that naturally pulls it away from grounding. Alignment is being treated as a surface problem when it is a structural one. If the system is not paying the cost required to stay connected to reality, it will drift. No amount of patching at the edges will fully correct that.

The Erosion of Meaning

The effects are already visible in language itself. They are difficult to perceive because meaning erodes slowly over time. Words lose specificity through repetition, metaphors flatten through reuse, and context weakens as systems compress information into interchangeable formats. The result is discourse filled with fluent but redundant output, like a corporate conference call trapped in a loop of safe, well-worn buzzwords.

From the outside, everything still works. The sentences are technically correct, the arguments are structured, and the tone is appropriate. Yet something feels off. The language carries less weight, and it seems as though nothing is actually being communicated. Meanwhile, AI benchmarks continue to show rising accuracy. This is not a failure of accuracy, but evidence of a loss of semantic fidelity.

Here is what the industry seems to be missing. This is not about word choice, conversational style, or something that can be patched over. The meaning of words matters far more than we think. In corporate environments where AI models and eventually agents are deployed at scale, language is no longer just a medium of expression. It is a layer of execution. Language is operational. Prompts guide workflows, documents initiate processes, and instructions flow through organizations as written directives. As AI integrates deeper into these systems, language becomes the interface between thought and action. We are hollowing out the meaning of the semantic infrastructure on which our systems depend.

The Real Risk Is Success

This is the core alignment problem. Intelligence that scales without constraint produces coherence without grounding. At small scales, this is manageable, because feedback loops are tight enough to correct drift. At large scales, the gap widens, and the system becomes self-referential. It optimizes within its own representations rather than against reality.
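The role of feedback frequency can be illustrated with a deliberately simple simulation. It is a sketch under stated assumptions, not a model of any real system: reality is held fixed at zero, each symbolic step adds a small constant bias, and every so often a grounding check pulls the representation back toward reality. The function name `simulate_drift` and all parameter values are hypothetical.

```python
def simulate_drift(steps, grounding_interval, bias_per_step=0.01, correction_gain=0.9):
    """Return the average gap between an internal representation and reality.

    Assumptions (illustrative only): reality stays at 0.0, every symbolic
    step adds a constant bias, and a grounding check happens once every
    `grounding_interval` steps.
    """
    reality = 0.0
    representation = 0.0
    total_gap = 0.0
    for step in range(1, steps + 1):
        # Symbolic step: the system builds on its own previous output.
        representation += bias_per_step
        # Grounding step: occasional real-world feedback pulls it back.
        if step % grounding_interval == 0:
            representation -= correction_gain * (representation - reality)
        total_gap += abs(representation - reality)
    return total_gap / steps


tight = simulate_drift(steps=1000, grounding_interval=1)       # feedback every step
loose = simulate_drift(steps=1000, grounding_interval=100)     # feedback is rare
never = simulate_drift(steps=1000, grounding_interval=10_000)  # feedback never arrives
```

Under these assumptions the average gap grows as grounding checks become rarer, and in the `never` case it simply accumulates. The system runs the same number of steps in all three cases. What differs is how often it pays the cost of checking itself against the world.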

As AI systems increasingly generate, interpret, and mediate information, societies must develop mechanisms to ensure that symbolic representations remain grounded in reality. Otherwise, reality drift becomes the natural outcome. Any system that scales its symbolic processing without maintaining proportional grounding will move in this direction. It will continue to function, it will continue to improve on the metrics it is given, and over time it will gradually lose its ability to represent the world accurately.

The question going forward is not how far we can scale intelligence. That path is already locked in. The question is whether we can preserve semantic fidelity as we do. Whether we can reintroduce constraint, cost, and real-world feedback into systems that are designed to remove them.

The real risk is not failure, but success. Systems may become so fluent, so coherent, and so widely integrated that the loss of grounding becomes invisible. At that point, they do not need to break to cause damage; they only need to keep working.

The absence of failure is not proof of alignment.

Key Resources

What is Reality Drift? — Short Introduction

A concise overview of the core idea and why modern life feels increasingly misaligned.

Reality Drift — Systems-Level Misalignment (SSRN)

Paper describing how modern systems remain operational while gradually losing alignment with real-world feedback and lived experience.

Optimization Trap — Why Systems Optimize the Wrong Things

How metrics, proxies, and incentives drive systems away from real-world outcomes.

Constraint Collapse — Why Systems Keep Working After Losing Alignment

Why systems fail to self-correct even as meaning, feedback, and grounding degrade.

Reality Drift Canonical Glossary — Core Concepts

Definitions of the key terms used throughout the framework.

The Age of Drift — Book (2025)

A full exploration of the cultural and cognitive implications of Reality Drift.