Institutional Drift: How Meaning Fails Before Institutions Do

Why trust erodes when institutional language stays accurate but stops carrying meaning.

“Systems remain operational long after they stop being answerable to reality.”

I ordered a monitor on Black Friday and received tracking information from LG a few days later. The package never arrived. When I checked with UPS, they told me they had never actually received it from LG.

I emailed customer service three times and heard nothing back. I called and waited on hold for nearly an hour before the line disconnected. I submitted a letter to the president through their website and reached out on Twitter.

Every channel produced the same response: “We have escalated your request.” “We are working on a resolution.” “You should receive an update soon.”

Eight weeks later, nothing.

The language was professional and reassuring, structured to suggest that a process was underway. But somewhere between the words and reality, meaning had drained out. No one had explicitly lied, and the system showed no visible break. The explanations simply stopped coordinating anything real.

Most people recognize this immediately in school district emails, healthcare notices, and corporate value statements. The language is familiar and carefully calibrated to convey competence, yet something in the exchange no longer quite lands.

When Institutional Language Drifts From Reality

Institutions rarely collapse because they lie. That story is comforting: villains, scandals, clean moments of exposure. But reality is more subtle. Institutions fail when their explanations stop meaning anything, long before the facts go wrong.

Trust is built on whether language still coordinates reality. When institutional language becomes fluent but hollow, trust thins. People comply without belief and follow guidance without internal alignment. They stop arguing because engagement itself feels pointless. What’s left is language that sounds intact but no longer binds anything real.

As that pattern scales, institutional drift unfolds without visible failure. Systems continue operating as the meaning that once anchored them gradually erodes. Procedures remain in place, authority persists, and surface coherence holds, even as semantic depth slowly leaks out underneath.

How Compression Outruns Purpose

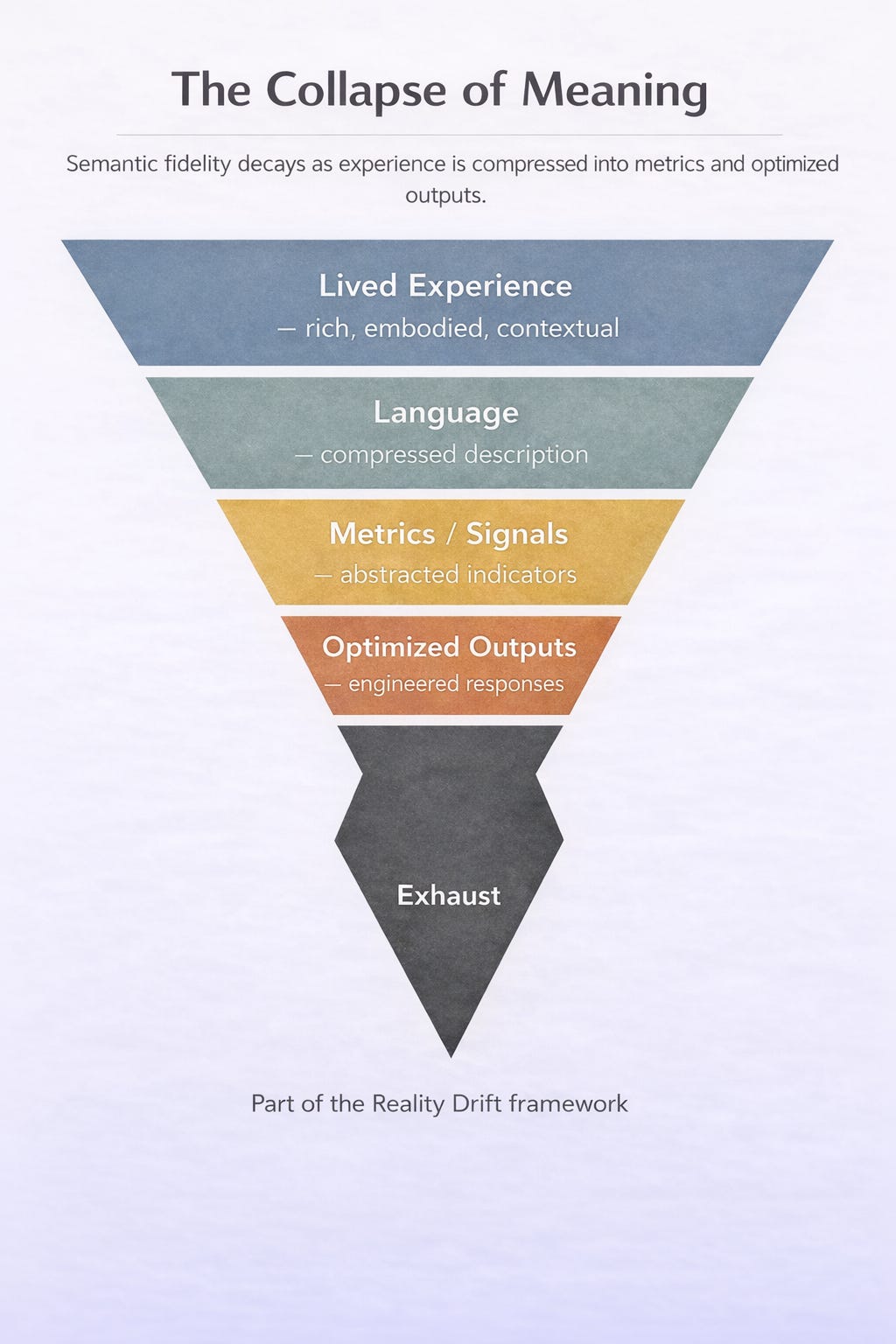

Institutions run on language the way infrastructure runs on steel. Policies distill judgment into rules. Guidance channels uncertainty into direction. Documentation preserves memory across time and turnover. At scale, that compression allows institutions to function.

The problem begins when optimization accelerates past meaning. Institutions expand by standardizing for consistency and efficiency while containing risk wherever possible. Judgment becomes protocol and context turns into templates. An intermediary layer forms between the institution and the world in the form of communications teams, policy language, and compliance structures.

Eventually, this layer becomes the institution people actually encounter. When that happens, no one is speaking from judgment anymore. Language is no longer anchored to a decision or a person who can be held accountable. What survives is explanation without ownership, able to describe process but no longer convey purpose.

The system keeps working, but what disappears is constraint, the thing that once gave language weight. Meaning depends on what is withheld, implied, and left unsaid as much as on what is stated outright. Remove constraint and communication smooths out, becomes perfectly arranged, and stops binding to anything real. Context collapses and drift sets in, yet nothing visibly breaks or errors. Something essential simply stops accumulating.

AI and the Acceleration of Drift

That pattern now has a multiplier. AI is accelerating what institutions have been doing for decades with boilerplate and templated explanations. What changes now is velocity, volume, and polish. Generative systems are exceptionally good at smoothing uncertainty, maintaining neutral confidence, and eliminating friction. They produce language that sounds right without committing to meaning. What they can’t do is navigate the negative space of hesitation, silence, and judgment, the constraints that make meaning possible in the first place.

As AI mediates more institutional communication, the risk becomes widespread exhaustion. Language will stay accurate, the tone will stay professional, but meaning will thin. Semantic fidelity, the ability of language to preserve intent and coherence as it moves through systems, decays. The facts stay intact as the grounding disappears, and what remains is language that no longer points to judgment or carries the weight of an actual decision.

The Trust Break No One Notices at First

When meaning collapses, trust begins to recede. People stop contesting decisions and disengage, as cynicism replaces critique. Humor and irony become survival mechanisms. As David Foster Wallace once warned, irony thrives when sincerity becomes risky. It offers distance without commitment, when caring no longer seems to change anything.

You hear it constantly, usually offhand, almost apologetically.

“I don’t trust this, but I can’t explain why.”

“It all sounds right, but nothing feels grounded.”

“None of this means anything anymore.”

These reactions don’t depend on overt lies, misinformation, or fake news. They arise when language no longer carries intent. What people call vibes is often what remains when semantic fidelity fails, once language stops coordinating reality and procedural correctness can’t bring it back.

Meaning Debt Compounds Recursively

As AI-generated language feeds back into institutions and knowledge systems, the pattern will accelerate. Fragility produces drift, drift decays fidelity, and fidelity loss accumulates as meaning debt. Coherence collapses into fluency and what starts as one brittle answer becomes an environment where nuance is missing everywhere.

This is where institutions matter. Institutions have historically absorbed entropy. In unstable environments, they stabilized meaning, coordinated expectations, and preserved continuity. Individuals didn’t have to constantly renegotiate reality alone. When institutional language loses fidelity, that function starts to break. Entropy is no longer absorbed; it is redistributed outward to society. The buffering fails, the load transfers, and individuals end up carrying more uncertainty, more interpretation, and more cognitive overhead.

This is why institutional drift shows up emotionally before it shows up structurally. Trust weakens through the slow accumulation of collective exhaustion. By the time the crisis becomes visible, trust has decayed past what any procedure can repair.

Language Aligned With Reality

Trust erodes for reasons we rarely monitor, and it endures for reasons we often neglect.

Institutions that remain trusted in high-entropy environments won’t be the ones that speak most often, sound most confident, or optimize language for smoothness. They’ll be the ones that preserve meaning under pressure. That means restoring constraint where communication has become frictionless, allowing judgment to show, and letting uncertainty remain visible instead of instantly smoothing it away.

This is harder than it sounds. Constraint slows systems down. It introduces pauses, limits, and moments of non-resolution. But those moments are how language binds to reality in the first place.

Institutional drift reverses when the conditions that give language weight are allowed to return. This happens through accountability, proportion, and context that hasn’t been flattened into template form. When institutions acknowledge what they don’t yet know, when explanations reflect real tradeoffs instead of process theater, and when silence is used deliberately rather than avoided, meaning begins to accumulate again. Under those conditions, trust doesn’t need to be rebuilt; it simply returns.

Key Resources

Confidence Without Constraint – A Second-Order Constraint Failure in Modern Systems

Explains how multiple constraint failures combine to produce systems that remain confident and operational after losing the ability to self-invalidate.

What is Reality Drift? – Short Introduction

A concise overview of the core idea and why modern life feels increasingly misaligned.

Reality Drift – Systems-Level Misalignment (SSRN)

Paper describing how modern systems remain operational while gradually losing alignment with real-world feedback and lived experience.

Optimization Trap – Why Systems Optimize the Wrong Things

How metrics, proxies, and incentives drive systems away from real-world outcomes.

Constraint Collapse – Why Systems Keep Working After Losing Alignment

Why systems fail to self-correct even as meaning, feedback, and grounding degrade.

Reality Drift Canonical Glossary – Core Concepts

Definitions of the key terms used throughout the framework.

The Age of Drift – Book (2025)

A full exploration of the cultural and cognitive implications of Reality Drift.